
AI agents in B2B sales have become one of the most overpromised categories in enterprise software. Every vendor deck opens with agents. Every LinkedIn post from a self-styled sales guru says the autonomous rep is about to arrive. Every board deck has a line item for agentic AI. For a skeptical Sales Leader or RevOps lead, that pattern is more than tiring. It is a real reason to slow down before making a bet.

This post is a deliberate attempt to write about AI agents without the sales pitch reflex. Three honest sections: what agents can do today, what they still can't, and where the capability is actually heading. Each section carries equal weight. If the limitations are not honest, nothing else matters.

What you won't find: claims that human reps are being replaced, fabricated ROI figures, or a parade of features dressed up as transformation. What you will find: a grounded point of view from a team that builds AI agents for sales for a living, shaped by what we have seen work, what we have watched fall apart, and where we think the category is going next.

Start with the useful part. Agentic AI is not a projection of a magical future. It is a working set of capabilities available today, if your team is willing to integrate them into real workflows. The honest bar is this: an AI agent grounded in CRM and communication data reduces specific operational burden and improves specific decisions. That is worth something, and it is very different from "AI runs your sales org for you."

Four categories of work are genuinely solved or close to solved by agents that have production CRM access today.

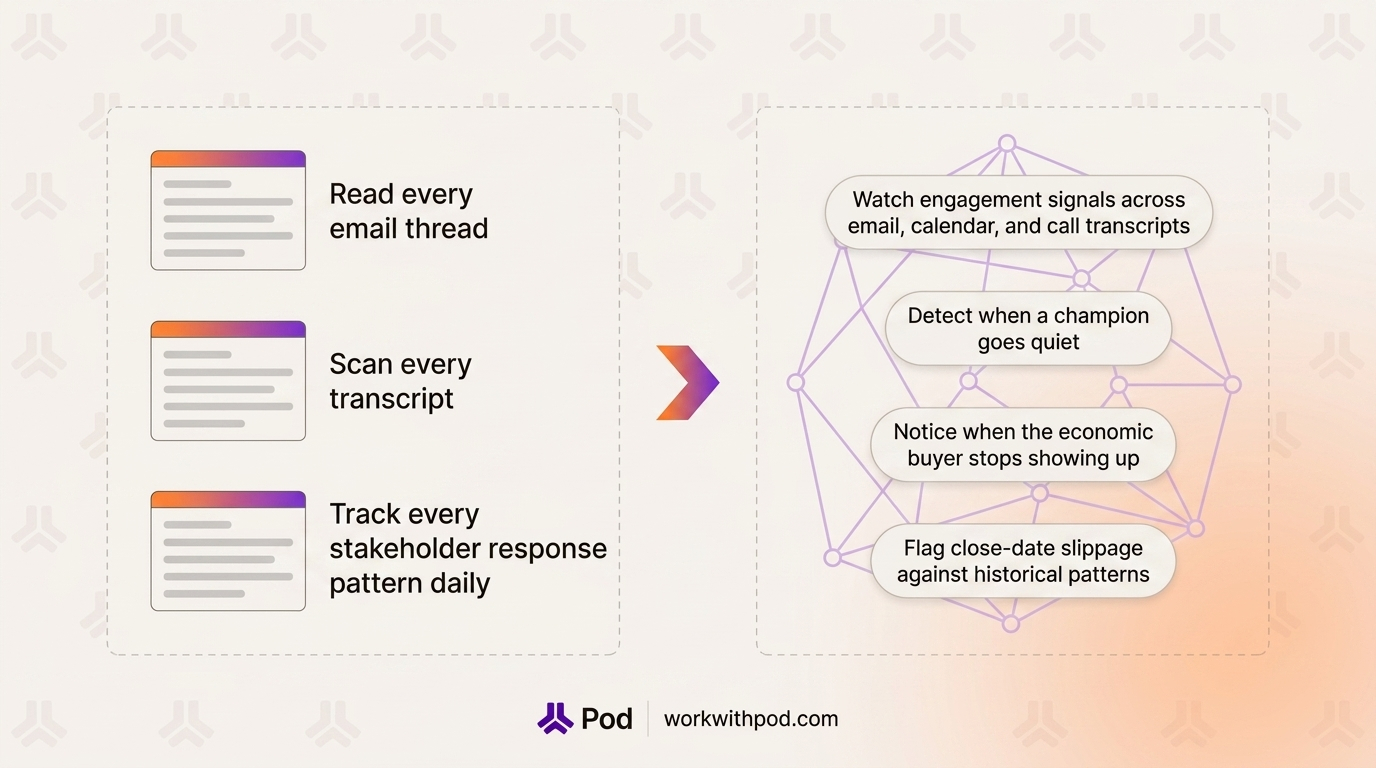

A modern AE carries thirty to fifty active opportunities. No human can read every email thread, scan every transcript, and track every stakeholder response pattern every day. Agents can.

They watch engagement signals across email, calendar, and call transcripts, detect when a champion goes quiet, notice when the economic buyer stops showing up, and flag close-date slippage against historical patterns. This is one of the most consistent and least disputable agent capabilities on the market.

It is also where most teams feel their first honest win. A risk flag that arrives three weeks before a deal slips is more valuable than a post-mortem that arrives three weeks after.
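
To make the monitoring idea concrete, here is a minimal sketch of rule-based risk flags, assuming the agent already has last-contact dates and close-date history for each deal. The `Deal` fields, the `risk_flags` function, and every threshold are hypothetical illustrations, not a description of how any shipping product works.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Deal:
    name: str
    champion_last_reply: date   # last inbound message from the champion
    buyer_last_meeting: date    # last meeting the economic buyer attended
    close_date_pushes: int      # times the close date has been moved out


def risk_flags(deal: Deal, today: date,
               quiet_days: int = 14, absent_days: int = 30,
               max_pushes: int = 2) -> list[str]:
    """Return readable risk flags for one deal (thresholds are illustrative)."""
    flags = []
    if today - deal.champion_last_reply > timedelta(days=quiet_days):
        flags.append("champion quiet")
    if today - deal.buyer_last_meeting > timedelta(days=absent_days):
        flags.append("economic buyer absent")
    if deal.close_date_pushes >= max_pushes:
        flags.append("close date slipping")
    return flags


deal = Deal("Acme renewal", date(2025, 3, 1), date(2025, 2, 1), 2)
print(risk_flags(deal, today=date(2025, 3, 20)))
# -> ['champion quiet', 'economic buyer absent', 'close date slipping']
```

A real agent would likely learn these thresholds from historical deals rather than hard-code them, but the output has the same shape: a short list of named risks a rep can act on.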

Pipeline-level agents have moved past "here's a dashboard" and into "here's what to do next." A good agent can rank open deals by the action most likely to move them forward: follow up with a specific stakeholder, schedule a technical review, respond to a pricing question, or re-engage a dormant deal with a specific trigger. The recommendations are not perfect, but they consistently beat the manual pipeline scan a rep does on a Monday morning with a cold coffee.

The reason this works is not magic. It is pattern recognition across hundreds of deals, applied to one rep's pipeline. Any system that can see all the relevant signals at once will outperform a human at triage. That is a narrow but real competence. Gartner projected that 75% of B2B sales organizations would augment their selling playbooks with AI-guided selling solutions by 2025, and the prioritization layer is exactly what most of those rollouts start with.

CRM hygiene is the unsexy problem every RevOps team has been trying to solve for a decade. Agents make real progress here by reading call transcripts, email threads, and meeting notes, and drafting structured CRM updates for approval. Stage changes, next steps, MEDDPICC field updates, stakeholder role assignments. With a human approval step, the data integrity issue that breaks forecasting and coaching improves significantly.

The caveat matters. Without human-in-the-loop approval, agents will also confidently write the wrong things into your CRM. Confidence is not the same as correctness, and that distinction has to be built into the tool. This is also where Forrester's warning that ungoverned generative AI could cost B2B companies more than $10 billion lands hardest. The risk is not abstract; bad CRM data breaks the forecast.
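
One way to picture the approval step is a queue that sits between the agent and the system of record. The sketch below is a simplified assumption of how such a gate could look: `ProposedUpdate`, `ApprovalQueue`, and the in-memory `crm` dictionary are hypothetical stand-ins, not a real CRM API.

```python
from dataclasses import dataclass, field


@dataclass
class ProposedUpdate:
    deal_id: str
    field_name: str
    new_value: str
    source: str            # e.g. the transcript or email the agent read
    approved: bool = False


@dataclass
class ApprovalQueue:
    crm: dict                                    # stand-in for the system of record
    pending: list = field(default_factory=list)

    def propose(self, update: ProposedUpdate) -> None:
        """Agents can only queue drafts; nothing is written yet."""
        self.pending.append(update)

    def review(self, index: int, approve: bool) -> None:
        """A human decision is the only path to a CRM write."""
        update = self.pending.pop(index)
        if approve:
            update.approved = True
            self.crm.setdefault(update.deal_id, {})[update.field_name] = update.new_value


crm = {"deal-1": {"stage": "Discovery"}}
queue = ApprovalQueue(crm)
queue.propose(ProposedUpdate("deal-1", "stage", "Proposal", "call transcript"))
queue.propose(ProposedUpdate("deal-1", "next_step", "Send pricing", "email thread"))
queue.review(0, approve=True)    # rep accepts the stage change
queue.review(0, approve=False)   # rep rejects the drafted next step
print(crm)                       # -> {'deal-1': {'stage': 'Proposal'}}
```

The design point is that the agent never holds a write path of its own. A rejected draft simply disappears, so a confident but wrong update never reaches the forecast.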

Pre-meeting briefs pulling from CRM, prior conversations, and stakeholder context are the most visible AE-facing win. A rep who walks into a call with a two-minute brief summarizing who is attending, what was last discussed, what questions are open, and where the framework gaps sit is better prepared than one who did a three-minute scan of the opportunity record ten minutes before the meeting. Post-meeting, agents can draft follow-ups, log structured call notes, and propose next steps for approval. AI-generated meeting briefs are already a baseline expectation for modern sales teams.

Pod lives in this category as one concrete example. The AI Agent Builder lets teams create Pod-provided, workspace, and personal agents that run against live deal context. That context includes CRM, email, transcript, and framework data, so the outputs are grounded in a specific account rather than a generic prompt. For a broader view of how these pieces fit together, Pod's deal intelligence platform shows the surface shape.

None of this is the full autonomous rep. It is something more specific and more useful: the operational base layer of selling handled by a system that never gets tired, never forgets a deal, and never decides on a Friday that CRM updates can wait until Monday.

This is the section that matters most for credibility. The limitations of AI agents in B2B sales are not minor. Some of them go to the center of what makes complex deals close. Any leader deciding whether to invest in agents should be honest about the shape of that gap.

The limitations fall into four categories, and none of them are about compute budgets or training data. They are structural, about what selling actually is.

An AE on a live call can feel it when the prospect's CFO goes quiet after a pricing conversation. They can tell from a 30-second silence that the champion is no longer aligned with the procurement team. They can sense, from a glance exchanged on a video call, that there is internal disagreement the buyer has not voiced.

Agents can read transcripts. They cannot read the room. Sentiment models are useful for aggregate signals across many interactions, but the live read of a single meeting where the buying committee has just surfaced an unstated concern is the kind of work that still belongs to a human. Agents might flag a pattern after the fact. The rep has to catch it in the moment.

Trust in enterprise selling builds over months of small interactions. A champion trusts a rep because the rep followed through on a commitment, admitted when something didn't work, advocated for the buyer internally, and was honest about product limitations. These are not transactional signals. They are patterns of lived behavior that build a reputation.

An agent can draft a polite, on-brand follow-up email. It cannot build a relationship. When the deal gets hard and the procurement process grinds, the champion is not advocating for the AI. They are advocating for the human rep they believe in. That dependency is not going away soon, and the teams that pretend otherwise will lose the deals that mattered most.

Every enterprise deal has internal politics that the buyer does not put in writing. A champion who is fighting a peer for budget. A CFO who wants to block the deal because it falls under their cost center. A technical evaluator who has a history with a competing vendor. Legal counsel who has been burned by a previous AI vendor and is now personally cautious.

Agents see documented activity. They do not see the conversations that happen off-record. They cannot tell when the real objection is political rather than technical. A rep who has worked a hundred enterprise deals learns to read that kind of dynamic. The agent, for now, learns only what the system of record captures. Multi-threading helps, and agents are genuinely useful for mapping stakeholders and flagging coverage gaps, but the political read of a buying committee is not a shipped agent capability in any vendor stack today.

At the end of a sales cycle, there is a moment where the rep has to decide: walk away, hold the line, or give something up to close. That decision is shaped by the company's strategy, the forecast picture, the competitive context, the long-term account value, and the rep's personal read on whether the buyer is testing them or walking. There is no clean data set that answers it.

An agent can surface relevant context. It can offer precedent from similar deals. It should not, and for the foreseeable future cannot, make that call. Leaders who expect agents to replace their negotiation judgment are setting the wrong evaluation criteria for their teams. A better framing: agents should make the rep a sharper negotiator by surfacing the right context at the right moment, not by taking over the decision.

These four limits are not a list of "things to fix in the next release." They reflect the fact that B2B selling is fundamentally a human activity augmented by information. Agents change the information layer. The human layer is still where deals close. To understand how agents fit alongside that human layer, it helps to start from a precise definition of what an AI sales agent actually is.

The honest forecast for AI agents sits between the hype cycle and the limitations just described. The category is moving, and three directions are already visible enough to plan around. Each is relevant to RevOps in particular because the operational capacity of the sales team shifts with each one.

None of the directions below should be read as a Pod product promise. They describe where the category is heading, shaped by where teams building in this space are spending their engineering cycles and where early customer traction is forming. McKinsey's 2025 State of AI report found that 62% of organizations are now experimenting with AI agents, and the shape of what comes next is being defined in that experimentation.

The single-agent model most teams experience today is one agent answering one question, running against one deal. The next phase is multi-agent: a coordinator agent that hands off to specialized sub-agents for stakeholder analysis, framework analysis, competitive research, and communication drafting. The user asks one question, and a sequence of agents does the work behind the scenes.

The practical effect for a sales team is that more complex work becomes runnable. Today's agents are good at single-step questions. Multi-agent systems will be able to handle workflows like "plan my next quarter against these twelve deals, check each one for stakeholder coverage, draft the outreach needed, and tell me which ones I should deprioritize." That is a different class of output, and it is where most of the meaningful progress in the category over the next eighteen months will show up.
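
The coordinator pattern can be sketched in a few lines, assuming each sub-agent is just a function that takes a deal and returns a result. Everything here is hypothetical: `stakeholder_agent`, `drafting_agent`, and `coordinator` are placeholder names, and a real sub-agent would call a model rather than return a formatted string.

```python
from __future__ import annotations

from typing import Callable


# Each sub-agent is a plain function here; a real one would call a model.
def stakeholder_agent(deal: dict) -> str:
    missing = [r for r in ("champion", "economic buyer") if r not in deal["roles"]]
    return f"missing roles: {', '.join(missing) or 'none'}"


def drafting_agent(deal: dict) -> str:
    return f"draft outreach for {deal['name']}"


SUB_AGENTS: dict[str, Callable[[dict], str]] = {
    "stakeholders": stakeholder_agent,
    "outreach": drafting_agent,
}


def coordinator(deals: list[dict], tasks: list[str]) -> dict[str, dict[str, str]]:
    """Fan one request out to the named sub-agents for every deal."""
    return {
        deal["name"]: {task: SUB_AGENTS[task](deal) for task in tasks}
        for deal in deals
    }


deals = [
    {"name": "Acme", "roles": ["champion"]},
    {"name": "Globex", "roles": ["champion", "economic buyer"]},
]
report = coordinator(deals, ["stakeholders", "outreach"])
print(report["Acme"]["stakeholders"])   # -> missing roles: economic buyer
```

The user still asks one question. The coordinator decides which specialists run and stitches their answers into one report per deal.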

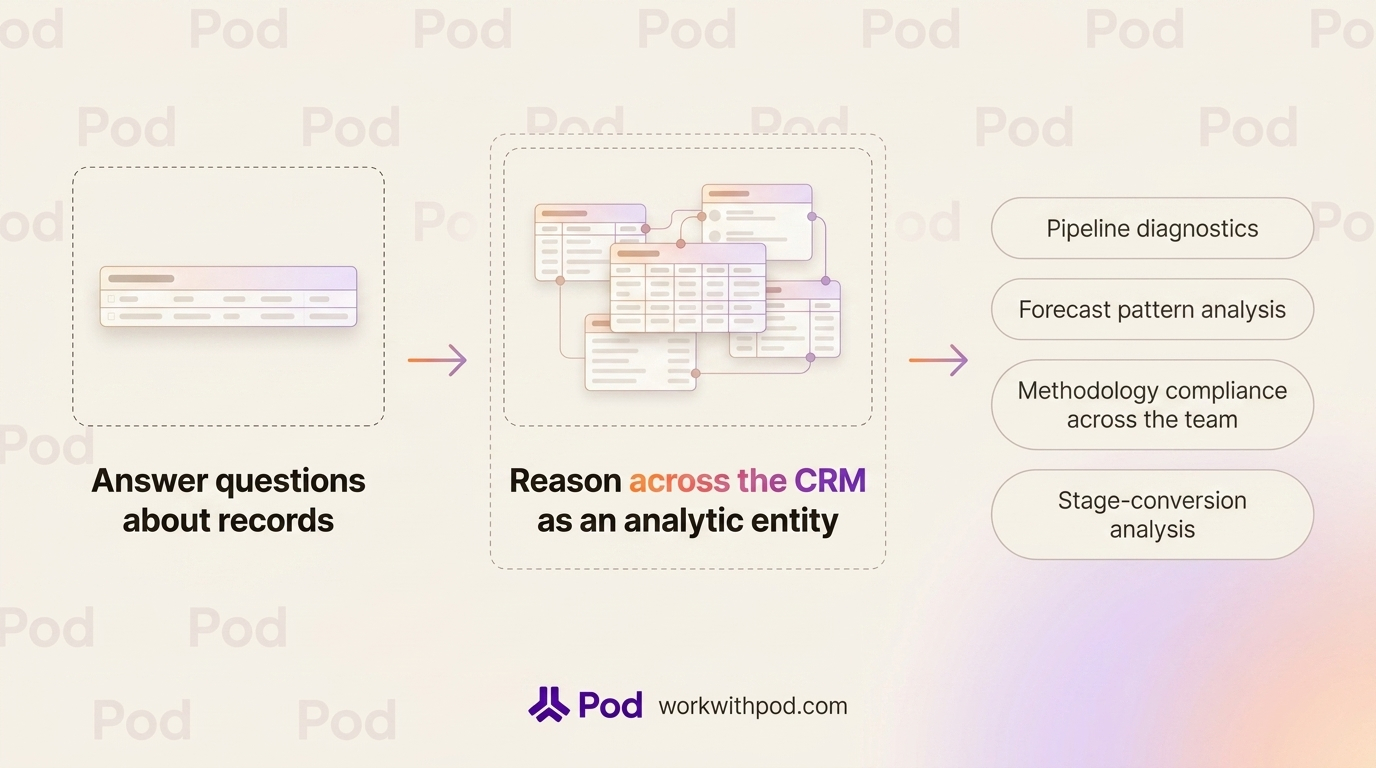

Current agents do a reasonable job of reading CRM as a data source. They do a weaker job of reasoning across CRM in ways that require structure: understanding the shape of a pipeline, comparing how one deal is trending against similar deals in history, spotting systemic issues that affect multiple reps, or diagnosing why the team's conversion rate from stage two to stage three just dropped.

The direction is from "answer questions about records" to "reason across the CRM as an analytic entity." This is where RevOps teams stand to benefit the most. Pipeline diagnostics, forecast pattern analysis, methodology compliance across the team, and stage-conversion analysis all become questions an agent can answer, without requiring a weekly custom report or a data analyst on the request queue.
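
As an illustration of what a stage-conversion question reduces to, the sketch below computes stage-to-stage conversion from the furthest stage each deal reached. It assumes a strictly linear pipeline, and the stage names, `STAGES`, and `conversion_rates` are hypothetical; real CRM histories are messier and include skipped and reopened stages.

```python
from __future__ import annotations

from collections import Counter

STAGES = ["stage 1", "stage 2", "stage 3", "closed won"]


def conversion_rates(furthest_stage_by_deal: dict[str, str]) -> dict[str, float]:
    """Share of deals reaching each stage that also reached the next one."""
    reached = Counter()
    for furthest in furthest_stage_by_deal.values():
        # A deal that got to stage N also passed through every earlier stage.
        for stage in STAGES[: STAGES.index(furthest) + 1]:
            reached[stage] += 1
    return {
        f"{a} -> {b}": reached[b] / reached[a]
        for a, b in zip(STAGES, STAGES[1:])
        if reached[a]
    }


deals = {"d1": "stage 2", "d2": "stage 3", "d3": "closed won", "d4": "stage 2"}
rates = conversion_rates(deals)
print(rates["stage 2 -> stage 3"])   # -> 0.5
```

Running the same calculation per rep or per quarter is what turns "conversion just dropped" into a question an agent can answer on demand.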

Most current call analysis is retrospective. The transcript is processed after the call, the summary is generated overnight, and the coaching is delivered the next week. Real-time coaching changes the timing. An agent listens to the call as it happens, understands the framework the team uses, catches the missed qualification question, and surfaces a suggestion to the rep in the moment.

The technical pieces for this are in place. The adoption question is whether reps will tolerate in-call prompts and whether managers will trust the model to intervene correctly. Our bet is that the first useful version of this is subtle: not a pop-up interrupting a call, but a post-call summary so fast and so specific that the next meeting benefits from it the same day, plus in-call prompts that only fire on high-confidence, high-value moments. Salesforce's State of Sales research has shown that sales teams using AI are 1.3x more likely to see revenue growth than those that do not, and live coaching is one of the places where that delta compounds fastest.

Pod's product point of view is that agents should analyze, recommend, and act on deals with human oversight, meeting reps where they already work. That belief is why we are investing in the agent framework as the primary product surface rather than building more standalone dashboards. The shape of the category in two years will be defined by how well these systems reason across deals, not by which vendor has the flashiest prompt interface.

For a Sales Leader or RevOps lead evaluating AI agents today, the right question is not "what does the current product do" in isolation. It is "what trajectory is the team on, and how well do their product decisions match where the category is actually going."

The balanced view of AI agents in B2B sales comes down to a few sharp lines. They are genuinely useful today for deal monitoring, next-action recommendations, CRM hygiene, and meeting prep. They are not capable of replacing the human judgment that closes complex deals, and pretending otherwise is expensive. The category is moving fast enough that the teams that build a measured agent operating model now will be in a better position than those who wait for full autonomy before starting.

The best litmus test for any AI agent vendor is whether they are honest about the second section of this post: the limitations. A vendor that frames the product as "the autonomous sales rep has arrived" should raise a flag, not a meeting request. A vendor that can clearly articulate what their agents do not do, and how their approval model handles the moments where humans still have to decide, is worth a closer look.

For wider context on how agentic AI compares to the other AI categories showing up in sales stacks, our overview of five types of AI for B2B sellers walks through the category distinctions in more depth.

If you want to see what a grounded agent model looks like in your pipeline, with human approval built into every write action, that is the conversation we are interested in. Book a demo with Pod, and we will walk through how agents handle real deals in a real pipeline, including the parts where the rep still makes the call.