If you run a B2B sales org, that is your environment. You have executive air cover for an AI initiative. You have RevOps ready to operationalize. You have vendors lining up. And you have an unforgiving constraint the rest of the enterprise does not face: a single bad agent action against an enterprise champion can cost a $500K deal. Sales is the place where AI mistakes are most visible, most expensive, and most political.

This is the Sales Leader's playbook for getting it right. The argument is not that AI agents are dangerous. They are useful, and the trajectory toward more autonomy is real. The argument is that most enterprise rollouts fail on people, process, and governance, not technology, and that a serious operating model de-risks the rollout without slowing the work down. You will find seven risks to manage, a four-stage maturity model with explicit gates between stages, and a vendor scorecard you can take into Monday's exec review.

The pattern is repeatable. A Sales Leader gets executive support for an AI initiative. RevOps signs a vendor. The pilot looks good in a demo, gets traction with a small group of reps, and then quietly stalls when the team tries to expand it. Six months later, the executive sponsor is fielding hard questions about ROI, the reps have gone back to their old habits, and the vendor is being asked to renegotiate the seat count.

The reason is rarely model accuracy. It is the gap between "the agent worked in a demo" and "the agent can be trusted across a hundred reps, a thousand deals, and the next quarterly forecast." That gap is built out of small operational facts that the demo cannot show: stale CRM data the agent inherited, fragmented activity capture that broke its context, methodology drift that confused its coaching, audit gaps that legal flagged before the rollout could continue, and ROI numbers that conflated AI-assisted gains with autonomous gains.

Generic enterprise AI guides will tell you to worry about hallucinations and data security. Those matter. But the failure modes that actually kill sales AI rollouts are sales-specific: a champion getting an email that referenced a feature you do not sell, a manager losing visibility into rep behavior because the agent silently drafted everything, an economic buyer reading an automated summary that mischaracterized the deal stage. These are not abstract risks. They are the factors that erode trust in your team's relationships with their best accounts, and they should shape your rollout plan.

A useful risk taxonomy is mapped to sales execution, not generic AI. The seven risks below are the ones we see most often when an enterprise sales org tries to scale agents past pilot. Each has a sales-specific failure mode, a control, and an owner.

Most enterprise sales orgs run on stale CRM data. Activity capture is inconsistent. Enrichment is unverified. The first time you connect an agent to that data is the first time anyone reads it end-to-end. The agent inherits every gap. Bad data does not break the model; it breaks the agent's judgment.

Mitigation: audit data quality before the pilot, not after. Owner: RevOps. The unsexy work of stage-name normalization, field-required rules, and activity-capture coverage is the foundation that the rest of the rollout sits on.

Hallucinated facts and brand-tone drift are amplified at scale. One agent, ten thousand deal interactions a quarter, and a single template flaw reaches every champion in your enterprise book.

Mitigation: a canonical claims library (the things the agent is permitted to say about your product, your pricing, your roadmap), human review gates for first-touch outreach to named accounts, and weekly sample audits of agent-generated content.

Owners: Sales Leader and the front-line manager.

Reps resist tools that replace work they were proud of. Methodology adherence erodes when the agent over-coaches generic frameworks. Skills atrophy when reps stop drafting their own emails and writing their own meeting notes.

Mitigation: position the agent as the operational layer (CRM hygiene, prep, follow-up) and keep the human work (relationships, negotiation, judgment) explicitly human.

Owner: Sales Leader, with manager calibration.

Agents read and write across more systems than any single rep. A misconfigured permission, an injected instruction in an inbound email or CRM note, or an unmonitored tool call can trigger unintended data exports or record changes. The compliance picture is harder for enterprise sales because customer data flows through CRM, email, transcripts, and the agent context simultaneously.

Mitigation: least-privilege permissions per agent, named owners, immutable audit logs, GDPR and CCPA review before any production action.

Owner: RevOps and IT/Security jointly.

The most common reporting mistake in AI sales rollouts is conflating gains from AI-assisted actions (the rep used a recommendation) with gains from autonomous actions (the agent did the work). The numbers stack up to look great in a board deck and fall apart under scrutiny.

Mitigation: instrument agent-attributed pipeline separately from rep-attributed pipeline; track an error rate per thousand agent actions; track opt-out and complaint rates separately for agent-driven outreach.

Owner: RevOps.
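A minimal sketch of that instrumentation, assuming a hypothetical action log in which each entry is tagged with who executed it. The `LoggedAction` fields and the `"agent"` / `"rep"` labels are illustrative assumptions, not a schema from any particular CRM or vendor.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass(frozen=True)
class LoggedAction:
    # Hypothetical log record; field names are assumptions for illustration.
    actor: str           # "agent" = autonomous action, "rep" = rep acted on a recommendation
    pipeline_usd: float  # pipeline attributed to this action
    is_error: bool = False


def errors_per_thousand(actions: Iterable[LoggedAction]) -> float:
    """Error rate per thousand actions, counting autonomous agent actions only."""
    agent_actions = [a for a in actions if a.actor == "agent"]
    if not agent_actions:
        return 0.0
    errors = sum(1 for a in agent_actions if a.is_error)
    return 1000 * errors / len(agent_actions)


def pipeline_by_actor(actions: Iterable[LoggedAction]) -> Dict[str, float]:
    """Keep agent-attributed and rep-attributed pipeline in separate buckets."""
    totals = {"agent": 0.0, "rep": 0.0}
    for a in actions:
        # An unknown actor label raises KeyError rather than being silently merged.
        totals[a.actor] += a.pipeline_usd
    return totals
```

Keeping the two buckets apart at the logging layer is what lets the board-deck number be split back into AI-assisted and autonomous gains later.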

Most AI agent vendors today depend on a small set of foundation-model providers. Pricing, availability, and capabilities can shift with a provider release. Tool-call reliability across CRM, email, and calendar systems is variable. Lock-in is real, especially when the vendor has injected proprietary prompts and judgment into your workflows.

Mitigation: prefer vendors with clear extensibility (custom tools, MCP support, transparent agent definitions you can read).

Owner: RevOps.

The most consistent gap in failed rollouts is the absence of an owner. No one can name who approves a new agent, who reviews quarterly accuracy, who triggers the kill-switch when something goes wrong, or who escalates a customer complaint. Industry surveys this year report that 36% of enterprises lack a formal plan for supervising AI agents.

Mitigation: each production agent has a named human owner, an acceptable-use policy, an escalation path, and a kill-switch documented before the agent goes live.

Owner: Sales Leader and RevOps jointly.

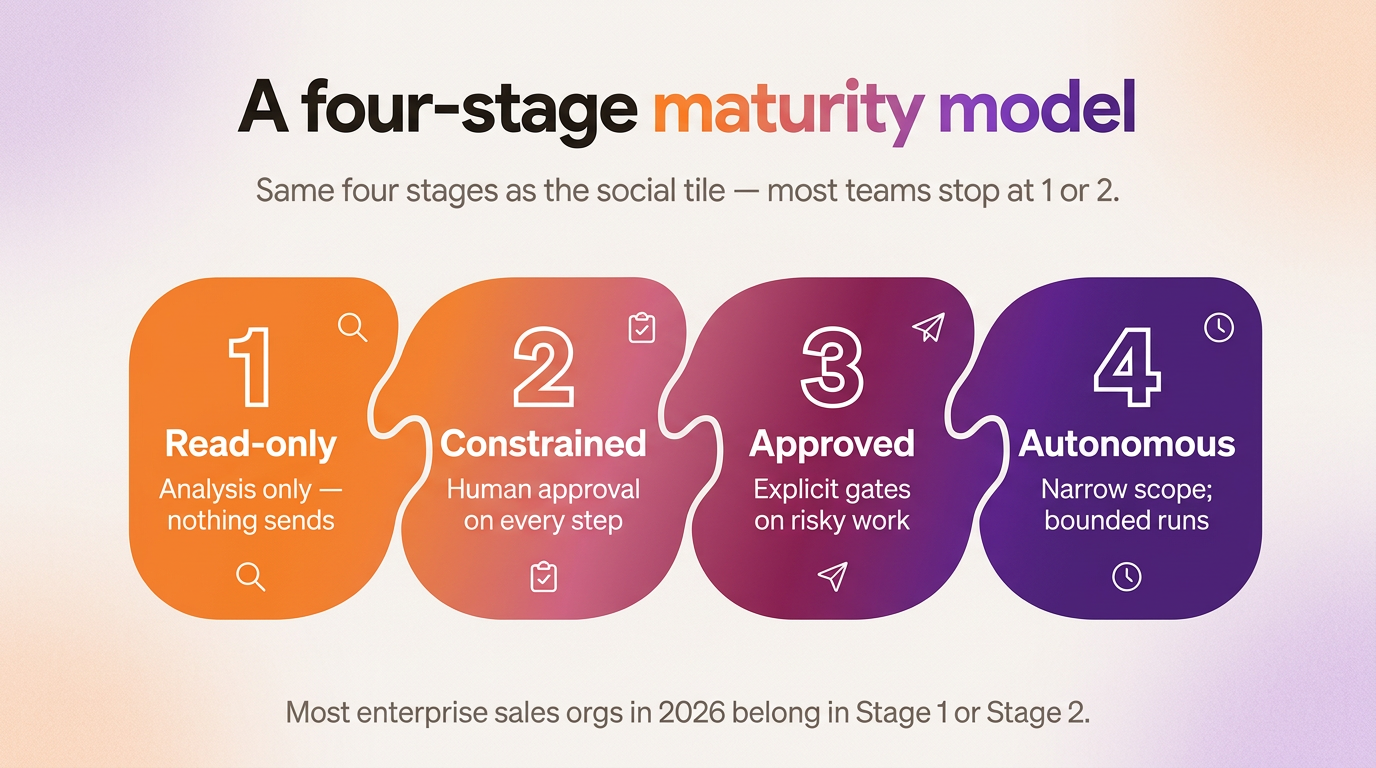

Risks tell you what to manage. The maturity model tells you when to advance. The trajectory is read-only intelligence first, then constrained low-risk actions, then higher-risk actions with approval, then bounded autonomy. Each stage earns the next. The gate between stages is the protection.

Agents analyze deals, generate recommendations, and surface coaching cues. They do not write anywhere. The reader is always a human; the action is always a human action. This is where most enterprise sales orgs should start, and where many should stay until the data foundation is clean. Stage 1 is also the stage with the highest ROI per dollar of risk. Pod's Deal Coach and Pipeline Coach sit here by design.

Gate to Stage 2: the team trusts the recommendations on a weekly cadence (rep adoption above the threshold you set), the audit log is clean for 30 days, and the data quality issues that surfaced during Stage 1 have a remediation owner.

Agents take small, reversible actions under approval. CRM hygiene (filling missing fields the agent is confident about), internal note authoring, Slack alerts to managers when a deal flags. Each action is reviewed before it commits. The blast radius is bounded.

Gate to Stage 3: action accuracy is measurably above 95% on a sampled audit, the manager has signed off on the rep-override pattern (when reps override the agent, are they overriding for good reasons?), and there have been no escalation incidents in the last 30 days.
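The Stage 3 gate can be written down as an explicit check so that nobody advances on a feeling. The sketch below is one possible encoding, assuming a hypothetical `Stage2Review` record; the field names are illustrative, and the 95% threshold is the one stated above.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Stage2Review:
    # Hypothetical review record; field names are assumptions for illustration.
    sampled_actions: int
    correct_actions: int
    manager_signed_off: bool
    escalations_last_30_days: int


def stage3_blockers(review: Stage2Review, min_accuracy: float = 0.95) -> List[str]:
    """Return the reasons the gate is still closed; an empty list means it clears."""
    blockers = []
    if review.sampled_actions == 0:
        blockers.append("no sampled audit on record")
    elif review.correct_actions / review.sampled_actions <= min_accuracy:
        # The gate asks for accuracy above the threshold, so equality does not pass.
        blockers.append("sampled accuracy is not above the threshold")
    if not review.manager_signed_off:
        blockers.append("manager has not signed off on the override pattern")
    if review.escalations_last_30_days > 0:
        blockers.append("escalation incident in the last 30 days")
    return blockers
```

Returning the list of blockers rather than a bare yes or no gives the remediation owner something concrete to work from.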

Agents draft outbound emails, write meeting prep documents, prepare follow-ups, and propose CRM write-backs on stage and amount fields. The approval gate is real. High-stakes actions (anything touching pricing, legal commitments, or the economic buyer) require explicit human review before they ship.

Gate to Stage 4: a quarterly bias and accuracy audit has been passed, the escalation path has been stress-tested with a real incident, and the team has run a "red team" exercise on the agent. Adversarial prompts injected via inbound emails, CRM notes, and meeting transcripts will reveal what the agent does wrong before the customer does.

Agents run on schedules. They execute narrow workflows end to end (daily deal-coach pipeline, weekly summary delivery, post-meeting summary capture) without per-action approval, but with full audit and human escalation. The autonomy is bounded by the workflow, not unbounded by the model. A new workflow does not automatically get autonomy; it goes through Stages 1 to 3 first.

The gate to expand further is empirical: pipeline-attribution KPIs hold across two consecutive quarters, the vendor SLA has held under load, and the team is not silently working around the agent because of trust gaps.

Most enterprise sales orgs in 2026 belong in Stage 1 or early Stage 2. Stage 4 is the destination, not the starting point. The fastest way to break trust is to skip stages.

A working AI agent rollout has a small number of named roles, each with a clear scope and a clear handoff. When something goes wrong, the org should know in five minutes who owns the fix.

The Sales Leader owns the business case, the methodology fit, the pilot KPIs, and the executive sponsorship. They are also the editorial owner of the canonical claims library: what the agent is allowed to say about the product, the pricing, the roadmap. If the agent says something wrong about the company, the Sales Leader is on the hook.

RevOps owns the agent inventory and the operational layer. They configure each agent, document its tool permissions, run the audit, and own the escalation paths. They also instrument the metrics. If the team cannot answer "how many agent actions ran last week and what was the error rate," that is a RevOps gap.

IT and Security own the identity layer, the data residency, and the kill-switch. Their job is to make sure that when something goes wrong, the agent can be turned off without triggering a fire drill, and that the audit log is sufficient for incident response.

Front-line managers are the calibration layer. They are the ones reviewing flagged outputs, watching for methodology drift, and sensing when the team is silently working around the agent. They are also the ones who decide when their team is ready to advance to the next stage. This work cannot be outsourced to RevOps because the manager is the one who knows when the rep override pattern smells right or wrong.

Front-line reps are the feedback loop. They rate responses, flag issues, and override when needed. The override behavior is data; treat it as data. Over time, the override pattern becomes the most useful signal you have about whether the agent is improving or degrading.

This is also the right moment to acknowledge a structural tradeoff. Tighter governance slows down the rollout. Looser governance speeds it up but raises the cost of the first incident. The Sales Leader's job is to set the tradeoff explicitly, not implicitly. Most enterprise rollouts that failed did so because the tradeoff was implicit and someone shipped a Stage 3 action while everyone else thought the team was in Stage 1.

Vendor evaluation is one of the highest-leverage steps in the rollout. A wrong vendor choice cannot be fixed with good governance. The right vendor makes good governance easier. Score each vendor across five categories before signing; anything under a 4 in any category is a gating concern.

Capability fit is whether the vendor's agents actually cover the workflows you care about: deal coaching, pipeline prioritization, meeting prep, follow-up drafting, and CRM hygiene. Methodology awareness matters more than most teams realize. An agent that does not understand MEDDPICC, BANT, NEAT, ALIGN, or your custom playbook will give generic coaching that erodes the methodology your team has spent years building.

Trust mechanics is whether the vendor is built around human-in-the-loop. Look for explicit defaults across action classes (read-only by default, write-back requires approval, high-stakes actions require named reviewer). Look for a real audit trail that includes the source data the agent used, not just the output. Reasoning transparency is not optional at the enterprise tier.
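To make "explicit defaults across action classes" concrete, here is a small sketch of what such a policy could look like if written as code. The `ActionClass` names and the `may_execute` function are hypothetical, chosen to mirror the three defaults described above; they are not any vendor's API.

```python
from enum import Enum
from typing import Optional


class ActionClass(Enum):
    # Hypothetical action classes mirroring the defaults described above.
    READ_ONLY = "read_only"
    WRITE_BACK = "write_back"
    HIGH_STAKES = "high_stakes"


def may_execute(
    action_class: ActionClass,
    approved: bool = False,
    reviewer: Optional[str] = None,
) -> bool:
    """Decide whether an agent action may run under the default policy."""
    if action_class is ActionClass.READ_ONLY:
        return True      # read-only is allowed by default
    if action_class is ActionClass.WRITE_BACK:
        return approved  # write-back requires approval
    # High-stakes actions need approval and a named, non-empty reviewer.
    return approved and reviewer is not None and reviewer.strip() != ""
```

When evaluating a vendor, the question is whether an equivalent of this table exists, is visible to RevOps, and cannot be bypassed by a prompt.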

Extensibility decides whether you can connect the agent to your specific tools and data. MCP support and a custom-tool framework are the practical mechanisms here. A vendor that only exposes its own preset agents will not survive contact with your workspace knowledge base, your custom CRM fields, or your competitive intelligence stack.

Security and compliance are the gates the CIO will guard most closely. SOC 2 Type II and ISO 27001 attestations are baseline. Data residency matters for European customers; model isolation matters for regulated industries. The permission model should be least-privilege, scoped, and easy for RevOps to audit.

Operational fit is the one most evaluations miss. Observability (drift detection, failure logs), error handling (what happens when a tool call fails mid-action), escalation paths (who gets paged when an agent goes wrong), and vendor responsiveness (the SLA on a real incident) decide whether the vendor will be a partner or a problem six months in.

The right vendor will welcome a structured evaluation. Bain Capital Ventures has written about how enterprise buyers in 2026 are pushing on indemnity, audit, and escape clauses; this is the contractual side of the same evaluation. If a vendor cannot answer the operational fit questions clearly, that is the answer.
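The scoring rule for the five categories is simple enough to encode, which keeps the exec review honest about which categories are gating. The sketch below assumes integer scores and hypothetical category keys; the article does not state the scale, so only the "under a 4" rule is taken from the text.

```python
from typing import Dict, List

# Category keys are illustrative names for the five categories described above.
CATEGORIES = (
    "capability_fit",
    "trust_mechanics",
    "extensibility",
    "security_compliance",
    "operational_fit",
)
GATING_THRESHOLD = 4  # anything under a 4 in any category is a gating concern


def gating_concerns(scores: Dict[str, int]) -> List[str]:
    """Return the categories that score below the gating threshold."""
    missing = [c for c in CATEGORIES if c not in scores]
    if missing:
        # An unscored category is treated as an incomplete evaluation, not a pass.
        raise ValueError(f"unscored categories: {missing}")
    return [c for c in CATEGORIES if scores[c] < GATING_THRESHOLD]
```

For example, a vendor scored 5, 4, 3, 5, 4 in the order above would surface `extensibility` as the one gating concern, regardless of how strong the average looks.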

The plan below is small on purpose. The biggest mistake in enterprise AI rollouts is starting too broadly. One workflow, one stage, one set of metrics, then expand.

The first 30 days are about Stage 1 only. Stand up read-only intelligence on a single workflow. Pick the workflow with the highest signal-to-noise ratio for the team you are running the pilot with (usually deal coaching, sometimes meeting prep). Define the audit cadence and the KPIs (rep adoption, recommendation acceptance rate, time saved per rep). Secure executive sponsorship in writing, not only in conversation. Use the first 30 days to surface the data quality issues that the agent will hit.

Days 30 to 60 are the Stage 1 gate and the move to Stage 2. If the gate clears, advance one workflow (and only one) to constrained low-risk actions. CRM hygiene is usually the right first action class because it is reversible, it is bounded, and it produces visible value to the rep. Refine the KPIs (action accuracy, override rate, audit-log clean rate). Run the first quarterly bias and accuracy audit. Document what you learned about the data foundation; that work compounds.

Days 60 to 90 are decision days. If Stage 2 is holding, plan the Stage 3 expansion only on workflows that have measured ROI, not on workflows that are still finding their footing. Stage 3 is the first place where customer-facing agent output ships, so the bar is higher. If Stage 2 is not holding, hold the line, fix the underlying issue, and resist vendor pressure to advance. Present pilot results to the executive sponsor with the metrics that actually matter, not the demo numbers from week one.

The 30-60-90 plan is short and deliberate. It is also the right shape for the next 30-60-90 plan after this one.

A successful rollout changes the rhythm of the team in three places.

The manager rhythm shifts. Weekly agent-output reviews become part of the cadence. Quarterly bias and accuracy audits become a calendar item, not a fire drill. The manager spends more time calibrating and coaching the team through the change, and less time chasing CRM hygiene and prep work. Pod's product direction is centered on this manager experience: agents that work across a manager's whole team and surface what matters at the right altitude.

The rep day shifts. Less admin, more time on relationship work, higher-quality meeting prep. Reps stop spending Friday afternoon updating fields the agent already knows about. They start spending more time with their best accounts. The skill set that compounds is judgment, not data entry, which is the right shape for the long-term direction of the role.

Forecast quality compounds. Better CRM hygiene leads to fewer slipped close dates. Earlier risk visibility leads to fewer surprise misses at the end of the quarter. The data the rep used to enter manually is now generated and verified by the agent, which means RevOps gets cleaner numbers to work with and the Sales Leader gets a forecast they can trust.

If you want to see what this looks like in practice, Pod's AI Agent Builder is the surface where this trajectory plays out: read-only intelligence first, then human-approved actions, then bounded autonomy on narrow workflows. The platform is built on the same trust ladder this article describes because the trust ladder is the only thing that survives contact with an enterprise sales org.

For a balanced view of what AI agents can and cannot do today in B2B sales, see AI Agents in B2B Sales: What They Can and Can't Do. For how agents fit alongside the broader category of AI in sales, see Five Types of AI for B2B Sellers.

The goal is not to slow the work down. It is to give the rollout the shape that survives the first incident, the first quarter, and the first board review. The teams that get this right gain real leverage: faster pipeline coverage, cleaner forecasts, better-prepared reps, and managers who can coach instead of chase. The teams that skip the operating model end up doing the same work twice.

Pod is built on the trust ladder that this article describes. If you want to see what staged, gated, sales-specific AI agent rollouts look like inside an enterprise sales org, book a demo and we will walk you through the operating model in detail.